Batch reactors, used in specialty chemical and pharma industries, are often inefficient due to manual steps and reactive control systems. AI optimization, using real-time pattern recognition and predictive modeling, transforms these reactors into autonomous systems. This leads to measurable improvements in yield, purity, cycle time, and energy efficiency—delivering mid-single-digit gains in performance and profitability without needing major equipment replacements.

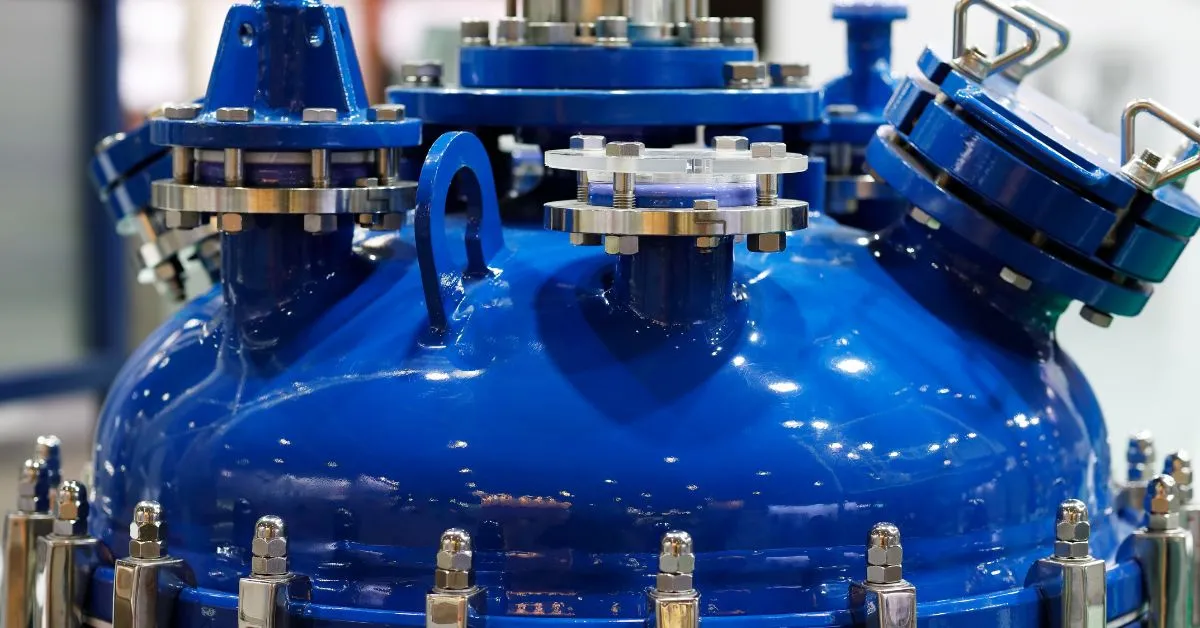

Batch reactors keep the specialty-chemical and pharmaceutical worlds flexible, yet that flexibility hides costly inefficiencies. Every run pauses for manual charging, cleaning, and quality checks, periods when equipment sits idle while labor costs climb.

Scale-up rarely means a bigger vessel; it usually means buying more vessels, multiplying capital and operating expense. With 26% of chemical companies prioritizing equipment replacement, many are treating this as the right moment to rethink their core assets. Heat-transfer limits and sluggish control loops further erode yield and waste energy, especially in exothermic reactions, where limited heat removal keeps operators from running as hard as the chemistry would allow.

AI optimization changes that equation. Plants that embed real-time pattern recognition, predictive modeling, and autonomous control into their reactors consistently achieve mid-single-digit improvements in yield, cycle time, and energy use—gains that multiply across hundreds of annual batches.

If you’re aiming to improve profitability in batch reactor operations, four core metrics reveal where it counts: yield, purity, cycle time, and energy efficiency.

To drive real improvements across these metrics, machine learning models require clean, synchronized historian data. But common issues—like missing sensors, inconsistent timestamps, or mismatched units—can stall model training and limit results.

The solution starts with aligning tag names across operations, maintenance, and lab systems, so all teams work from the same data set. Use automated data profiling to catch outliers, sensor drift, and dead tags before they compromise model accuracy.
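As a rough illustration of automated profiling, the sketch below flags three of the problems named above: dead (flatlined) tags, outliers, and drift. It is a minimal, standard-library-only sketch, not a production profiler; the function names, tag names, sample data, and thresholds (a modified z-score cutoff of 3.5 and a drift limit of three standard deviations) are all illustrative assumptions.

```python
from statistics import mean, median, pstdev


def is_dead_tag(values, min_spread=1e-6):
    """A tag whose readings never move is likely frozen or disconnected."""
    return max(values) - min(values) < min_spread


def robust_outliers(values, threshold=3.5):
    """Indices whose modified z-score (median/MAD based) exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []
    return [i for i, v in enumerate(values) if 0.6745 * abs(v - med) / mad > threshold]


def drift_score(values, window=10):
    """Shift of the last window's mean from the first, in first-window std devs."""
    head, tail = values[:window], values[-window:]
    spread = pstdev(head) or 1e-9  # avoid dividing by zero on a flat start
    return (mean(tail) - mean(head)) / spread


def profile_tags(tags, drift_limit=3.0):
    """Per-tag data-quality report: dead flag, outlier indices, drifting flag."""
    report = {}
    for name, values in tags.items():
        dead = is_dead_tag(values)
        report[name] = {
            "dead": dead,
            "outliers": [] if dead else robust_outliers(values),
            "drifting": (not dead) and abs(drift_score(values)) > drift_limit,
        }
    return report


# Hypothetical historian extract: 30 aligned samples per tag.
spiky = [50.0 + 0.1 * (i % 3) for i in range(30)]
spiky[15] = 65.0
tags = {
    "TI-101": [80.0 + 0.1 * (i % 3) for i in range(30)],            # healthy
    "PI-202": [2.5] * 30,                                           # frozen sensor
    "TI-103": spiky,                                                # one spike
    "FI-104": [10.0 + 0.1 * (i % 3) + 0.2 * i for i in range(30)],  # slow drift
}
report = profile_tags(tags)
```

The outlier test uses the median and median absolute deviation rather than the mean and standard deviation, so a single spike does not inflate the very statistic it is being judged against.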

When your data meets industrial-quality standards, AI systems learn faster, deliver more reliable insights, and keep these four key performance indicators moving in the right direction.

Manual recipes and familiar PID loops have kept batch reactors running for decades, but you pay a steep price for that simplicity. Operators must follow time-based steps and wait for sensors to register changes before taking action. Because PID control is reactive, correcting only errors that have already appeared, operators sometimes choose more conservative setpoints to stay safe. Those setpoints widen safety margins and slow operations; the conservatism comes from how PID is typically applied, not from the algorithm itself.

The resulting lag shows up first in safety. When a highly exothermic reaction spikes, a jacket governed by a conventional PID loop often cannot shed heat before temperature overshoots, raising the specter of thermal runaway and off-spec product losses. Even during normal operation, single-point measurements can miss local hot spots, leaving you with uneven conversion across the vessel.

Efficiency suffers next. To avoid oscillations, PID tuning errs on the side of caution, stretching ramp rates and elongating batch cycles. Predictive control studies show that PID-driven recipes require wider temperature cushions and longer hold times, directly lowering throughput and raising utility costs. As labor and energy accumulate during those extended hours, every extra minute erodes margin.
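To make the reactive behavior concrete, here is a textbook discrete PID loop for jacket cooling. It is a minimal sketch with hypothetical gains and a simple anti-windup rule, not a model of any particular DCS function block. The point it illustrates is that the output depends only on error that has already been measured: at setpoint it requests no extra cooling, however close an exotherm is to starting.

```python
class CoolingPID:
    """Minimal discrete PID for a reactor jacket (illustrative sketch).

    Output is cooling demand in percent (0-100). The controller only sees the
    current and previous measurement error, so it cannot respond to a heat
    release until the temperature has already started to rise.
    """

    def __init__(self, kp, ki, kd, dt):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.integral = 0.0
        self.prev_error = None

    def update(self, setpoint_c, measured_c):
        # Reverse-acting: temperature above setpoint gives positive error, more cooling.
        error = measured_c - setpoint_c
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / self.dt
        self.prev_error = error
        trial_integral = self.integral + error * self.dt
        raw = self.kp * error + self.ki * trial_integral + self.kd * derivative
        output = min(max(raw, 0.0), 100.0)
        # Simple anti-windup: keep the new integral only when the output is not saturated.
        if raw == output:
            self.integral = trial_integral
        return output


pid = CoolingPID(kp=8.0, ki=0.5, kd=2.0, dt=1.0)
# Reactor exactly at setpoint: zero error, so zero extra cooling requested.
before = pid.update(80.0, 80.0)
# One sample later an exotherm has already pushed the temperature up 3 degC.
after = pid.update(80.0, 83.0)
```

Only after the temperature has moved does the loop respond, which is the lag that conservative tuning and wider cushions are meant to absorb.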

Intelligent optimization flips this equation. By recognizing patterns in real time and adjusting multiple variables simultaneously, it keeps the process within safety limits without padding setpoints with extra margin, anticipates disturbances instead of waiting for them to show up in a measurement, and pushes each batch toward its optimal endpoint. The gap between what a reactive PID loop can maintain and what adaptive intelligence can deliver represents untapped profitability that traditional control simply cannot reach.

AI optimization transforms batch reactors by combining real-time pattern recognition, predictive modeling, and autonomous control capabilities. Unlike traditional control systems that react to changes after they occur, AI systems anticipate process dynamics and make proactive adjustments that maximize yield while minimizing energy consumption.

The following sections explore how these intelligent technologies work together to extract untapped value from existing equipment without major capital investments.

The moment you stream high-frequency temperature, pressure, and concentration data into machine learning algorithms, they begin mapping the normal rhythm of your batch. Over time, the system learns to recognize the signature of your Golden Batch—the ideal run in terms of yield, purity, and efficiency—and uses it as a dynamic benchmark.

When a signal drifts, even a subtle stirrer power wobble, the model flags it before it erodes yield or purity. Plants using these techniques have cut non-productive time by spotting deviations in minutes rather than waiting for sample results, trimming overall cycle time and energy use in the same stroke. Because the model watches every variable simultaneously, it picks up multivariate patterns that a single PID loop or an experienced operator would miss.
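A simplified version of Golden Batch monitoring can be sketched as a statistical envelope built from known-good batches, aligned by time step, against which a live batch is scored. This sketch assumes three sensors per step (temperature, pressure, stirrer power), treats them as independent, and sums their squared z-scores; production systems would more typically use a method that also captures correlations between variables, such as PCA-based monitoring. The trajectories and threshold are hypothetical.

```python
from statistics import mean, stdev


def golden_envelope(reference_batches):
    """Per time step, per variable (mean, std) across known-good batches.

    Each batch is a list of tuples: one tuple of sensor readings per aligned step.
    """
    envelope = []
    for step_readings in zip(*reference_batches):   # same step, every batch
        per_variable = zip(*step_readings)           # regroup readings by sensor
        envelope.append([(mean(v), max(stdev(v), 1e-9)) for v in per_variable])
    return envelope


def flag_deviations(live_batch, envelope, threshold=11.34):
    """Steps where the sum of squared z-scores exceeds the threshold.

    11.34 is roughly the 99th percentile of a chi-square distribution with three
    degrees of freedom, matching three independent, normally distributed sensors.
    """
    flagged = []
    for step, (readings, stats) in enumerate(zip(live_batch, envelope)):
        score = sum(((x - mu) / sd) ** 2 for x, (mu, sd) in zip(readings, stats))
        if score > threshold:
            flagged.append(step)
    return flagged


# Hypothetical (temperature degC, pressure bar, stirrer kW) trajectories.
base = [(60.0 + 5 * t, 2.0 + 0.1 * t, 10.0) for t in range(6)]
golden = [
    [(temp + d, pres + d / 10, power + d) for temp, pres, power in base]
    for d in (-0.5, 0.0, 0.5)
]
live = list(base)
live[4] = (base[4][0], base[4][1], 8.0)  # subtle stirrer power dip at step 4
flagged = flag_deviations(live, golden_envelope(golden))
```

Because `zip` stops at the shorter sequence, the same function can score a batch that is still in progress against the full envelope.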

Smart algorithms blend first-principles heat-balance equations with reinforcement learning (RL) trained on your historian data. By running thousands of virtual batches, the model forecasts how different temperature ramps or feed rates will play out before you commit raw material.

This forward look lets operators release product as soon as quality targets are met, often shaving hours off a batch. Because predictions refresh in real time, you can test “what-if” scenarios without risking off-spec product.
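The virtual-batch idea can be illustrated with a toy first-principles model: a first-order exothermic reaction with Arrhenius kinetics and a jacket heat balance, integrated with explicit Euler steps. Each candidate jacket ramp rate is simulated, and the highest-conversion ramp whose predicted peak temperature stays under a limit is selected. Every parameter is a hypothetical placeholder, and this is brute-force scenario screening, not the reinforcement learning described above.

```python
import math

# Hypothetical lumped parameters for a first-order exothermic reaction A -> B.
K0 = 4.0e8          # Arrhenius pre-exponential factor, 1/min
EA_OVER_R = 8000.0  # activation energy divided by gas constant, K
BETA = 10.0         # temperature rise per mol/L converted, K*L/mol
ALPHA = 0.1         # jacket heat-transfer term UA/(rho*cp*V), 1/min
CA0 = 2.0           # initial concentration of A, mol/L
T_START = 330.0     # initial reactor and jacket temperature, K
T_HOLD = 355.0      # jacket hold temperature at the end of the ramp, K


def simulate(ramp_k_per_min, minutes=180.0, dt=0.1):
    """Explicit-Euler virtual batch for one jacket ramp rate."""
    ca, temp, peak = CA0, T_START, T_START
    for step in range(round(minutes / dt)):
        jacket = min(T_START + ramp_k_per_min * step * dt, T_HOLD)
        rate = K0 * math.exp(-EA_OVER_R / temp) * ca     # mol/(L*min)
        ca -= rate * dt                                   # mass balance
        temp += (BETA * rate - ALPHA * (temp - jacket)) * dt  # heat balance
        peak = max(peak, temp)
    return {"ramp": ramp_k_per_min, "conversion": 1.0 - ca / CA0, "peak_k": peak}


def best_ramp(candidates, t_limit):
    """Highest-conversion ramp whose predicted peak stays at or under the limit."""
    results = [simulate(r) for r in candidates]
    feasible = [r for r in results if r["peak_k"] <= t_limit]
    best = max(feasible, key=lambda r: r["conversion"]) if feasible else None
    return results, best


T_LIMIT = 365.0
results, best = best_ramp([0.1, 0.25, 0.5, 1.0], T_LIMIT)
```

Returning `None` when no candidate is feasible keeps the caller from silently accepting a ramp that the model predicts would exceed the temperature limit.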

When those predictions feed directly back to the distributed control system (DCS), the loop closes. Intelligent techniques, validated against safety constraints from the outset, write optimal setpoints for jacket temperature or reactant feed in real time, tightening control far beyond conservative manual recipes.
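One common way to validate an optimizer's output against safety constraints before it reaches the control system is to clamp each proposed setpoint to hard limits and to a maximum change per write cycle. The sketch below assumes that approach; the class name and limit values are hypothetical, and the code deliberately stops short of any actual DCS write, since that interface is vendor-specific.

```python
from dataclasses import dataclass


@dataclass
class SetpointLimits:
    low: float       # hard lower bound, e.g. minimum jacket temperature
    high: float      # hard upper bound
    max_step: float  # largest change allowed per write cycle


def guard_setpoint(proposed, current, limits):
    """Clamp a proposed setpoint to rate-of-change limits, then to hard limits.

    Returns the value that would be written and a flag saying whether the
    proposal had to be modified (useful for an operator-facing audit log).
    """
    stepped = min(max(proposed, current - limits.max_step), current + limits.max_step)
    safe = min(max(stepped, limits.low), limits.high)
    return safe, safe != proposed


jacket = SetpointLimits(low=20.0, high=90.0, max_step=2.0)
small_move = guard_setpoint(71.0, 70.0, jacket)   # within limits, passed through
big_move = guard_setpoint(85.0, 70.0, jacket)     # rate-limited to current + 2
near_limit = guard_setpoint(95.0, 89.5, jacket)   # capped at the hard upper bound
```

Applying the hard limits last guarantees the written value is in range even if the current setpoint itself has drifted outside the allowed band.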

Field trials have kept exothermic reactions on target while boosting conversion. Operators still review every change through an explainable dashboard, but the heavy lifting (constant recalculation and adjustment) runs autonomously, delivering steadier quality and higher throughput without stretching your team.

You’ll move faster and see clearer value when you follow a disciplined five-phase path:

Following this structured implementation approach ensures your AI optimization project delivers measurable returns while maintaining operational safety and building team confidence. Each phase builds upon the previous one, creating a foundation for sustainable performance improvement that continues long after initial deployment.

Judge intelligent optimization like any capital project: compare incremental profit to investment cost. The ROI equation stays simple: annual benefit minus implementation cost, divided by implementation cost.

Translate each performance improvement into dollars. Extra yield becomes additional saleable product: multiply the yield increase by annual production volume and by the contribution margin per unit. Energy savings come from lower utility bills: subtract post-optimization consumption from baseline consumption, then multiply by your tariff rate. These improvements also support profitable decarbonization efforts, a growing focus in the chemical industry.

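Fewer off-spec batches free up cash once tied up in rework and scrap: multiply the number of off-spec batches avoided by the cost of each one. Shorter cycles add throughput: the hours saved across a year's batches, divided by the new cycle time, give the number of extra batches you can run, each worth its profit per batch, provided the plant is capacity-constrained and the market can absorb the extra product.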
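The dollar translations and the ROI equation fit in a few lines. All input values below are placeholders, not benchmarks. In this sketch the yield term is relative yield gain times annual output times margin, and the throughput term converts hours saved into extra batches at the new cycle time, which assumes a capacity-constrained plant whose extra output can be sold.

```python
from dataclasses import dataclass


@dataclass
class PlantCase:
    # All fields are hypothetical placeholders; substitute your own plant data.
    annual_output_units: float      # saleable units per year at baseline
    yield_gain_frac: float          # relative yield gain, 0.03 = 3% more product
    margin_per_unit: float          # contribution margin per unit
    baseline_energy_mwh: float      # annual utility consumption before
    optimized_energy_mwh: float     # annual utility consumption after
    tariff_per_mwh: float
    offspec_batches_avoided: float  # per year
    cost_per_offspec_batch: float   # rework or scrap cost per batch
    annual_batches: float
    hours_saved_per_batch: float
    new_cycle_hours: float          # cycle time after optimization
    profit_per_batch: float
    implementation_cost: float


def annual_benefit(c):
    yield_value = c.annual_output_units * c.yield_gain_frac * c.margin_per_unit
    energy_value = (c.baseline_energy_mwh - c.optimized_energy_mwh) * c.tariff_per_mwh
    quality_value = c.offspec_batches_avoided * c.cost_per_offspec_batch
    # Hours freed across the year, converted into extra batches at the new cycle time.
    extra_batches = c.annual_batches * c.hours_saved_per_batch / c.new_cycle_hours
    throughput_value = extra_batches * c.profit_per_batch
    return yield_value + energy_value + quality_value + throughput_value


def roi(c):
    """(annual benefit - implementation cost) / implementation cost."""
    return (annual_benefit(c) - c.implementation_cost) / c.implementation_cost


case = PlantCase(
    annual_output_units=10_000, yield_gain_frac=0.03, margin_per_unit=50,
    baseline_energy_mwh=5_000, optimized_energy_mwh=4_700, tariff_per_mwh=80,
    offspec_batches_avoided=4, cost_per_offspec_batch=20_000,
    annual_batches=300, hours_saved_per_batch=2, new_cycle_hours=20,
    profit_per_batch=5_000, implementation_cost=200_000,
)
benefit, first_year_roi = annual_benefit(case), roi(case)
```

With these placeholder numbers the annual benefit comes to 269,000 and the first-year ROI to 0.345, or 34.5%.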

Track performance by comparing at least one full quarter of baseline data with a matched post-deployment window. Continuous KPI dashboards prove both the immediate improvement and the compounding value as the model learns from each batch.
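For the baseline versus post-deployment comparison, a simple check is the mean shift in a KPI plus Welch's t statistic, which does not assume the two windows have equal variance. This is one reasonable choice rather than a prescribed method; the per-batch yields are hypothetical and far fewer than a full quarter would provide, and a real comparison should also account for feed quality, product mix, and other differences between windows.

```python
import math
from statistics import mean, variance


def compare_windows(baseline, post):
    """Mean KPI shift and Welch's t statistic (unequal variances allowed)."""
    shift = mean(post) - mean(baseline)
    std_err = math.sqrt(variance(baseline) / len(baseline) + variance(post) / len(post))
    return shift, shift / std_err


# Hypothetical per-batch yields (%) from a baseline window and a matched post window.
baseline_yield = [90.1, 89.8, 90.4, 90.0, 89.7]
post_yield = [91.0, 90.8, 91.3, 90.9, 91.1]
shift, t_stat = compare_windows(baseline_yield, post_yield)
```

If SciPy is available, `scipy.stats.ttest_ind(post, baseline, equal_var=False)` computes the same statistic along with a p-value.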

When you layer Imubit’s Closed Loop AI Optimization solution on top of your existing distributed control system (DCS), the model learns plant-specific operations in real time and writes optimized setpoints back to the DCS every few seconds. That continuous feedback delivers real-time action rather than periodic advice, driving efficiency and sustainability without forcing disruptive equipment changes.

Unlike traditional advanced process control solutions that depend on static equations and conservative safety margins, this platform combines deep reinforcement learning (RL) with first-principles understanding. The result is an autonomous system that adapts as feed quality, ambient conditions, or production targets shift, keeping reactors at the edge of their safe operating envelope while trimming energy use.

Beyond technology, Imubit delivers a full-service engagement: data readiness audits, operator coaching, continuous model maintenance, and expansion roadmaps that let you grow profits batch after batch—without overburdening front-line operations teams.

Smart optimization delivers measurable improvements in yield, quality, cycle time, and energy efficiency across batch chemical reactors. The path forward starts with understanding your plant’s specific constraints and opportunities.

For process industry leaders seeking sustainable efficiency improvements, Imubit’s Closed Loop AI Optimization solution offers a data-first approach grounded in real-world operations. The Imubit Industrial AI Platform learns your plant-specific operations, adapting in real time to deliver the yield, cycle-time, and energy improvements outlined throughout this article.

Ready to explore what’s possible? Get a Complimentary Plant AIO Assessment to map these improvements directly onto your operations and data infrastructure. The assessment carries no risk, and the potential returns make the conversation worthwhile.